This year, we’re heading to San Francisco for HumanX from April 6 through April 9, 2026. As the #1 AI conference, HumanX is built for the decision-makers and leaders deploying and scaling AI in the real world. We’re beyond excited to take part. Not only are we attending, but we’re also co-hosting curated spaces to […]

Author Archives: Massed Compute

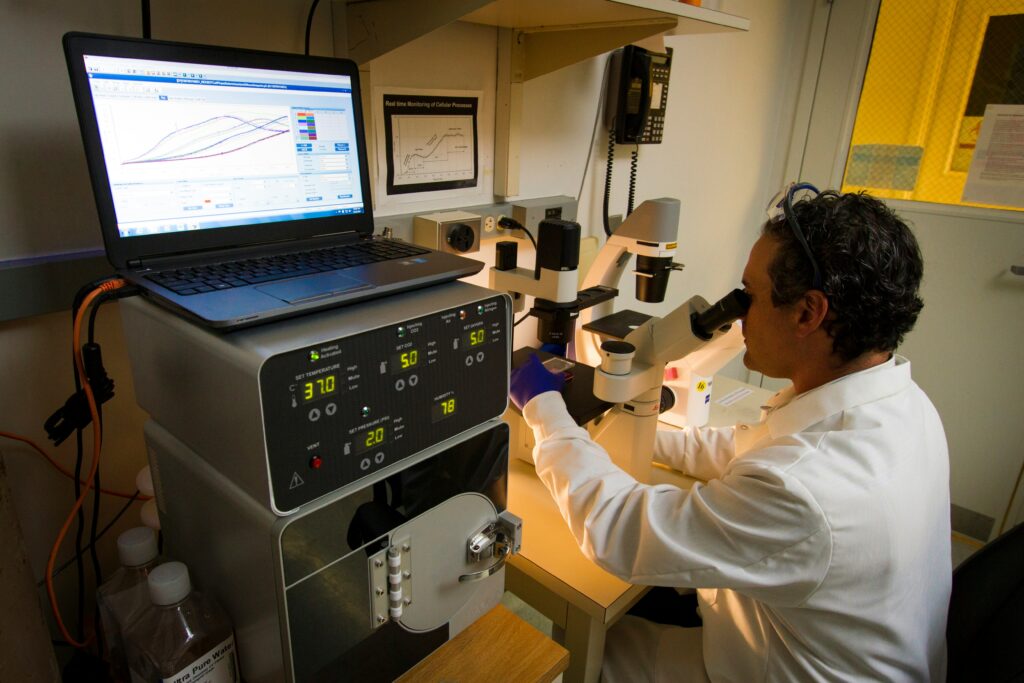

In the late months of 2022, the higher education sector was largely defensive. When ChatGPT first arrived, headlines were dominated by “plagiarism panics” and bans on generative tools. But stand on a university campus in 2026, and you’ll find that the “AI crisis” has been replaced by an “AI construction” boom. The narrative has shifted. […]

For more than a decade, public cloud platforms have transformed how enterprises deploy, scale, and manage infrastructure. The ability to access virtually unlimited compute resources on demand has enabled organizations to innovate faster and reduce the operational burden of managing physical hardware. However, as enterprise AI adoption accelerates and infrastructure costs climb, many organizations are […]

The real breakthroughs at NVIDIA GTC 2026 will be where the builders meet. This March 16-19 in San Jose, CA, we’re co-hosting three curated happy hour events designed for those shipping, not just pitching, the future of AI. Check out our events and sign up to join us for serious technical conversations, cold drinks, and […]

Modern enterprises face a critical infrastructure bottleneck as they compete for leadership in the AI sector. On one hand, the public cloud offers agility but often comes with variable costs and data sovereignty risks. On the other hand, traditional on-premises hardware ownership provides control but saddles IT departments with massive capital expenditures (CapEx) and the […]

Choosing the wrong infrastructure slows your research team down and quietly creates friction across your entire business: costs creep up, timelines slip, and risk starts to pile up in places you didn’t expect. A lot of specialized “neoclouds” sound great on paper, with easy access to GPUs, flexible scaling, and attractive pricing. But once […]

Graphics Processing Units (GPUs) are the workhorses of modern computational research, accelerating tasks that would otherwise take days or weeks on CPUs. When those GPUs are scarce, the ripple effects through university research programs are immediate and multifaceted: experiments slow or stop, inequities widen, budgets strain, and innovation timelines stretch out. Here’s how GPU shortages […]

An AI factory is a new class of infrastructure designed for one purpose: turning large volumes of data into usable intelligence at scale. Predictions, automated decisions, real‑time recommendations, continuously improving models… these are the outputs of an AI factory. Unlike traditional data centers or general‑purpose clouds, AI factories are purpose‑built to run AI workloads as efficiently, predictably, […]

The “AI Race” is often portrayed as a hunt for silicon. Enterprises scramble to secure high-performance GPUs, believing that raw compute power is the sole gatekeeper to innovation. However, compute is only as fast as the network that feeds it. Traditionally, procuring high-end GPUs (GPU-as-a-Service) and configuring the massive pipes needed to move data (Network-as-a-Service) […]

Retrieval-Augmented Generation (RAG) has quickly become one of the most powerful patterns in modern AI engineering. By combining a large language model with a retrieval layer that pulls in relevant external knowledge, RAG enables applications to stay factual, context-aware and up to date without continually retraining the underlying model. But while RAG seems conceptually simple […]