Choosing the wrong infrastructure slows your research team down and quietly creates friction across your entire business. This is because costs creep up, timelines slip, and risk starts to pile on in places you didn’t expect. A lot of specialized “neoclouds” sound great on paper. Easy access to GPUs, flexible scaling, attractive pricing. But once […]

Author Archives: Massed Compute

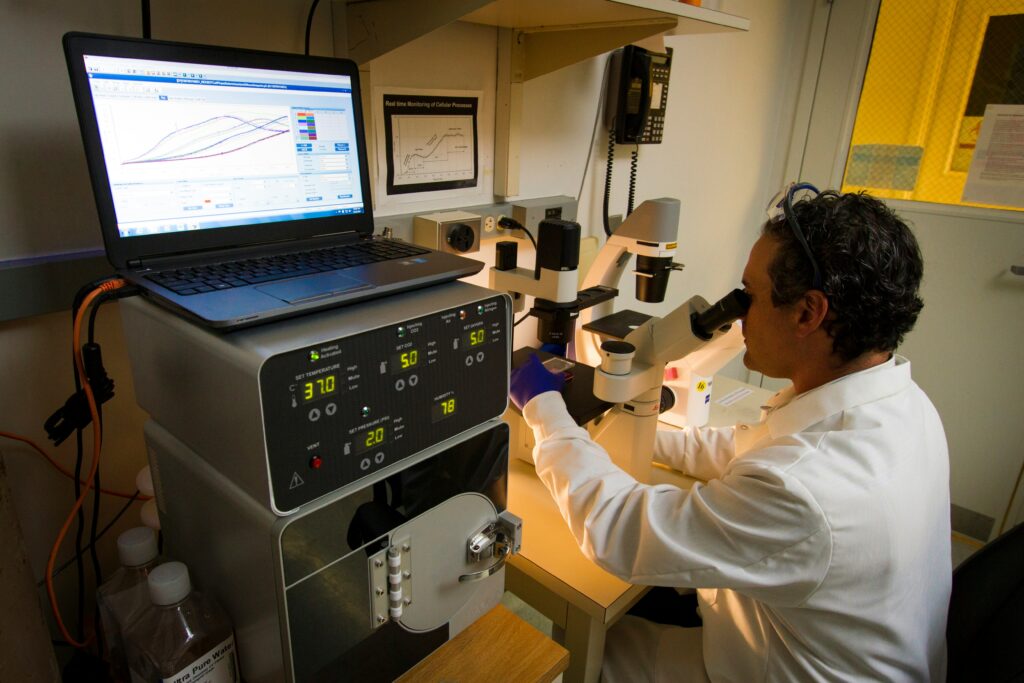

Graphics Processing Units (GPUs) are the workhorse of modern computational research, accelerating tasks that would otherwise take days or weeks on CPUs. When those GPUs are scarce, the ripple effects through university research programs are immediate and multifaceted: experiments slow or stop, inequities widen, budgets strain and innovation timelines stretch out. Here’s how GPU shortages […]

It is a new class of infrastructure designed for one purpose: turning large volumes of data into usable intelligence at scale. Predictions, automated decisions, real‑time recommendations, continuously improving models… these are the outputs of an AI factory. Unlike traditional data centers or general‑purpose clouds, AI factories are purpose‑built to run AI workloads as efficiently, predictably, […]

The “AI Race” is often portrayed as a hunt for silicon. Enterprises scramble to secure high-performance GPUs, believing that raw compute power is the sole gatekeeper to innovation. However, compute is only as fast as the network that feeds it. Traditionally, procuring high-end GPUs (GPU-as-a-Service) and configuring the massive pipes needed to move data (Network-as-a-Service) […]

Retrieval-Augmented Generation (RAG) has quickly become one of the most powerful patterns in modern AI engineering. By combining a large language model with a retrieval layer that pulls in relevant external knowledge, RAG enables applications to stay factual, context-aware and up to date without continually retraining the underlying model. But while RAG seems conceptually simple […]

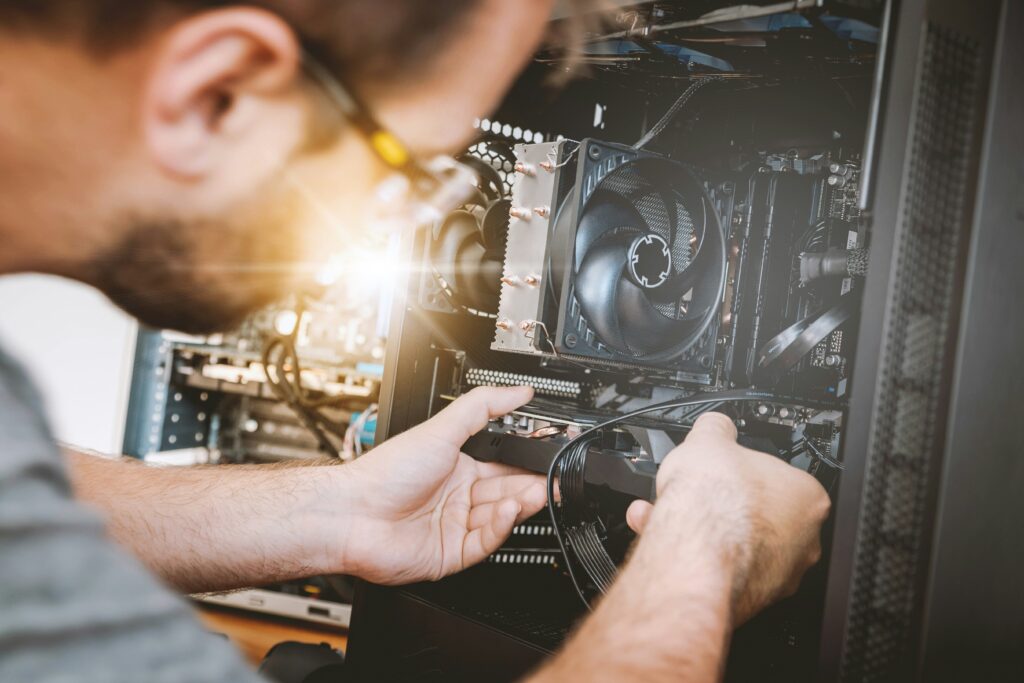

Your computer performs countless tasks simultaneously, from browsing social media and writing emails to running complex software. To handle these tasks efficiently, your system relies on different components, and one of the most critical is the GPU (Graphics Processing Unit). A GPU was originally designed to accelerate graphics rendering for video games. Today, it’s used […]

AI face replacement is the substitution or modification of faces using artificial intelligence (AI), and it’s been getting lots of attention in the last few years. Through VFX and rendering technology, you can rejuvenate an actor through digital de-aging, create digital doubles to perform stunt scenes, or even create AI-generated actors trained from thousands of […]

AI is a core component of how modern organizations operate, innovate and compete. From large language models (LLMs) and generative AI tools to real-time inference powering enterprise applications, the demand for high-performance compute has never been higher. Yet as AI adoption grows, many companies are hitting a familiar bottleneck when trying to access affordable, reliable […]

When we see names like Ampere, Lovelace, Hopper or Blackwell on graphics cards or AI accelerators, we might think they’re just marketing labels, the name of a scientific star meant to grab attention. But behind each of these generations lies a story: a major technological leap and a tribute to some of the brightest minds […]

Just a few weeks ago, the infrastructure of Amazon Web Services (AWS) suffered an outage that left businesses all over the world on standby. For hours, companies faced blocked queues, frustrated customers and lost sales. It was a reminder that we are dependent on digital infrastructure, and when a service fails, the impact can be […]